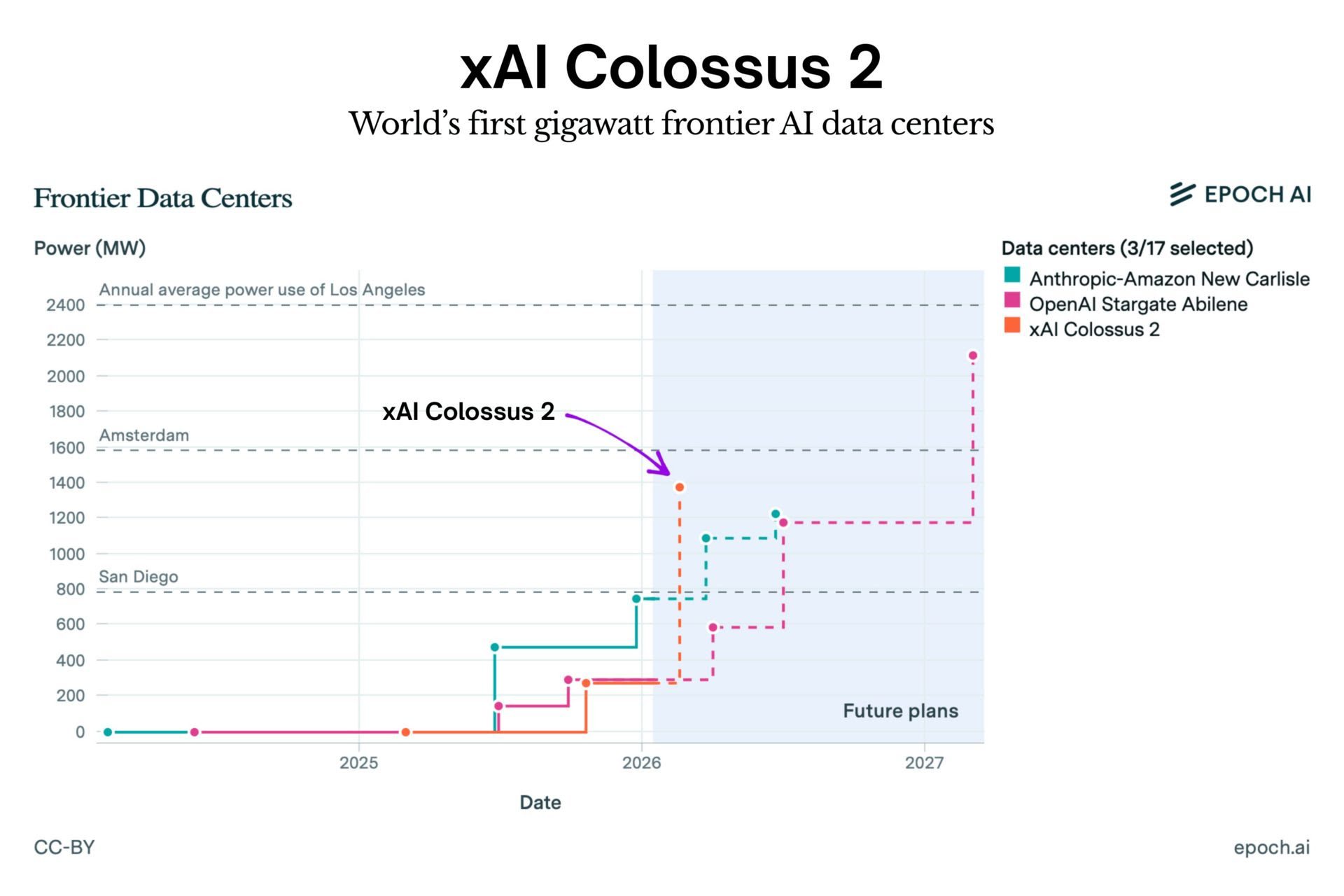

xAI has officially switched on Colossus 2, a record-breaking AI training cluster that draws one gigawatt of power, more than the entire city of San Francisco uses at peak demand. As the first operational system of this scale, it lets xAI train massive frontier models at a single site rather than splitting the workload across multiple data centers. The facility is expected to scale to 1.5 gigawatts by April, with plans to reach 2 gigawatts soon after. The cluster runs on approximately 555,000 GPUs, an estimated hardware investment of $18 billion.
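
For a rough sense of scale, the reported totals can be divided by the GPU count. The sketch below uses only the three figures quoted above; the variable names are illustrative, and the results are simple averages rather than vendor specifications. The power figure is the whole facility's draw spread across the GPUs, so it presumably also covers cooling, networking, and other overhead, and the cost figure is an average over the estimated hardware investment, not a per-unit price.

```python
# Figures as reported in the article (all approximate).
GPU_COUNT = 555_000          # approximate number of GPUs
FACILITY_POWER_W = 1e9       # one gigawatt, in watts
HARDWARE_COST_USD = 18e9     # estimated hardware investment

# Simple averages: total divided by GPU count.
# Assumption: the totals are spread evenly across all GPUs.
watts_per_gpu = FACILITY_POWER_W / GPU_COUNT
usd_per_gpu = HARDWARE_COST_USD / GPU_COUNT

print(f"{watts_per_gpu:,.0f} W of facility power per GPU")   # 1,802 W
print(f"${usd_per_gpu:,.0f} of hardware spend per GPU")      # $32,432
```

On these numbers, each GPU accounts for roughly 1.8 kilowatts of facility power and about $32,000 of the estimated hardware spend.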