OpenAI has introduced GPT-5.1-Codex-Max, a major upgrade to its coding-focused model lineup and a clear push toward long-horizon, agentic software development. Built on an updated version of OpenAI’s foundational reasoning model, Codex-Max is designed not just to generate code but to interpret requirements, plan multi-step tasks, and carry them out across full development workflows. In effect, it functions as a high-bandwidth engineering partner that can stay coherent over extremely long sessions.

One of the model’s defining capabilities is compaction: as a session approaches the limit of its context window, the model condenses its history, keeping the critical context and discarding the rest. This lets Codex-Max work coherently over millions of tokens in a single task, such as refactoring a large codebase, debugging an issue that spans many files, or running an extended agent loop, without losing track of earlier decisions. OpenAI says the model was evaluated on realistic engineering tasks, including pull request creation, code review, front-end development, and in-depth Q&A, and that it consistently outperformed earlier versions. Because it was also trained to operate in Windows environments, it is more versatile across operating systems.

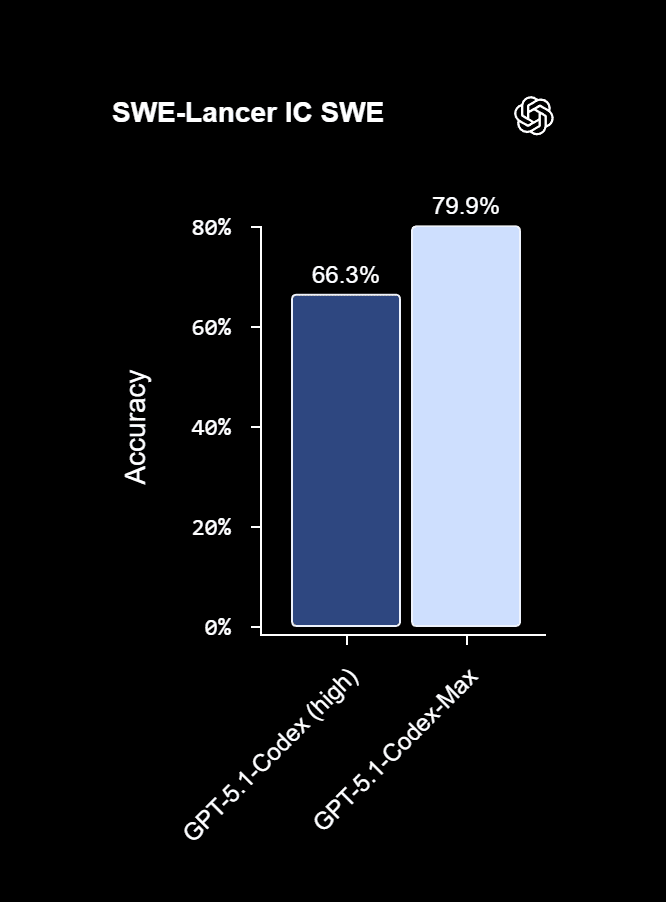

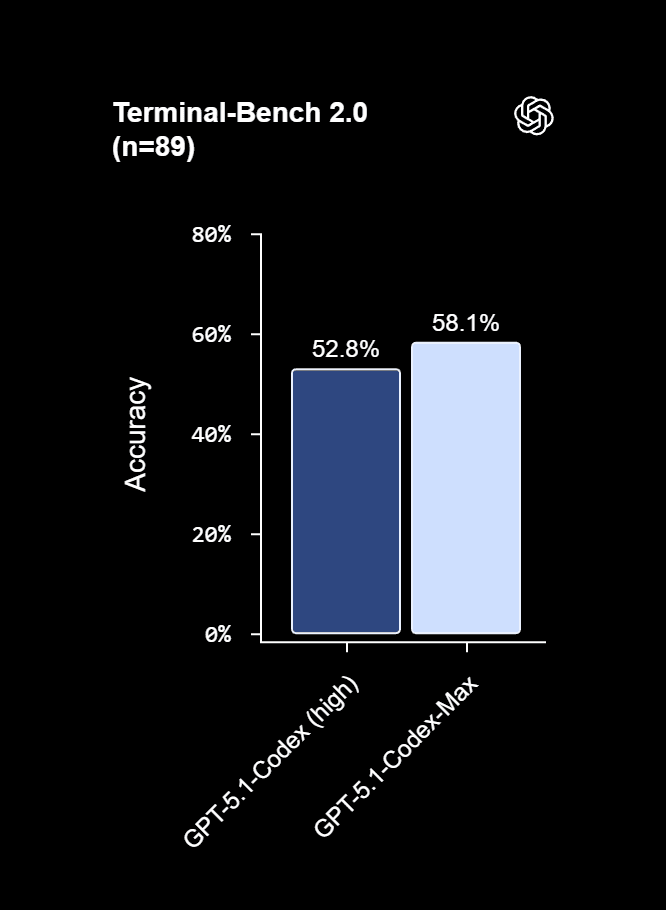

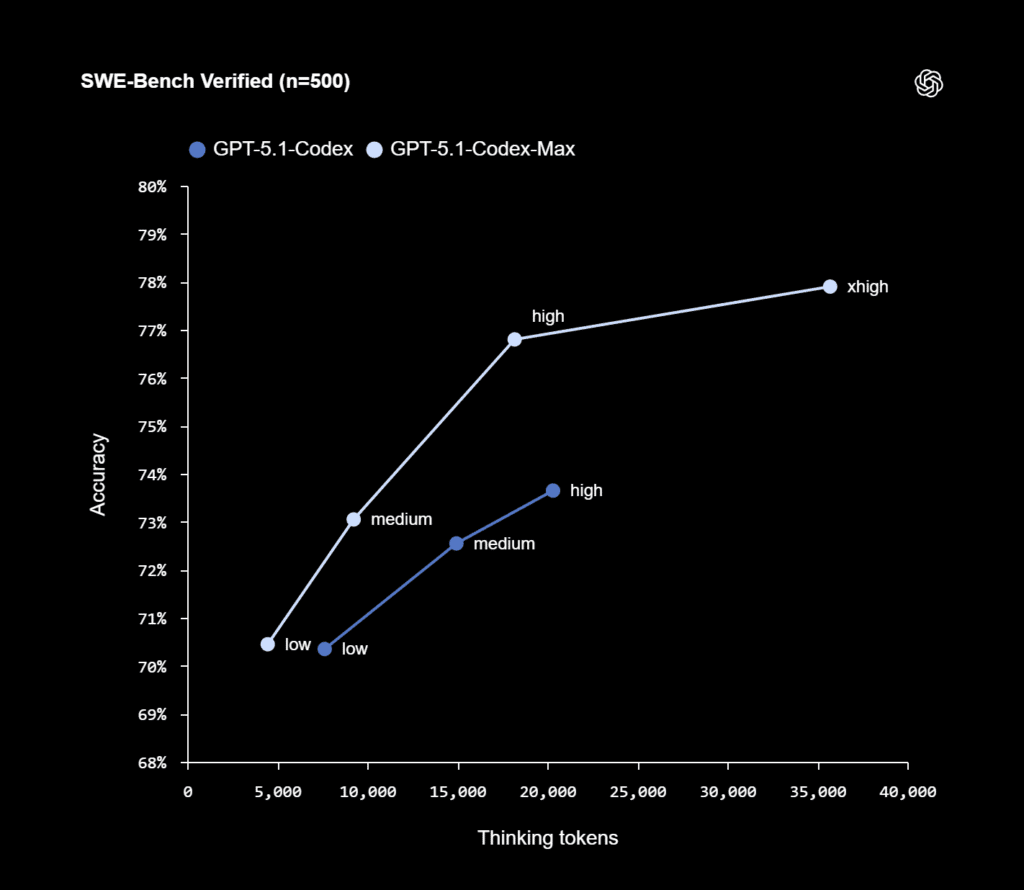

Efficiency is a major focus. At medium reasoning effort, Codex-Max matches or exceeds the quality of GPT-5.1-Codex while using nearly a third fewer reasoning tokens. For developers who don’t need fast responses, a new “extra-high” reasoning effort lets the model think longer to produce stronger results, which suits complex refactoring, architecture changes, or research-heavy tasks. In practice, this should mean higher accuracy at lower cost and more reliable performance on long tasks.

OpenAI reports that Codex-Max can work continuously on a single task for more than 24 hours. During testing, it refactored an entire CLI repository, compacting its context and reprioritizing repeatedly along the way. Rather than stalling when it hits the context limit, it prunes its history, keeps the essential logic, and moves forward, much like an engineer who carries a running mental model of the whole project.
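OpenAI has not published how compaction works internally, and Codex-Max is trained to decide for itself what to keep. Purely to illustrate the general idea, here is a minimal Python sketch of a context-compaction loop. It assumes a fixed token budget, a crude word-count tokenizer, and a hypothetical `summarize` step that keeps only messages tagged `DECISION:`. Every name here (`Context`, `count_tokens`, `summarize`, and the `DECISION:`/`SUMMARY:` tags) is invented for the example and is not part of any OpenAI API.

```python
from dataclasses import dataclass, field

SUMMARY_PREFIX = "SUMMARY: "  # hypothetical tag marking a compacted summary


def count_tokens(text: str) -> int:
    """Crude stand-in tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def summarize(old: list[str]) -> str:
    """Hypothetical summarizer: keep messages tagged DECISION:, drop the rest.

    Decisions carried by an earlier summary are unpacked and kept, so
    repeated compaction does not lose them.
    """
    kept: list[str] = []
    for msg in old:
        if msg.startswith(SUMMARY_PREFIX):
            parts = msg[len(SUMMARY_PREFIX):].split(" | ")
            kept.extend(p for p in parts if p)
        elif msg.startswith("DECISION:"):
            kept.append(msg)
    return SUMMARY_PREFIX + " | ".join(kept)


@dataclass
class Context:
    budget: int            # hypothetical token budget for the context window
    keep_recent: int = 2   # newest messages that are always kept verbatim
    messages: list[str] = field(default_factory=list)

    def total_tokens(self) -> int:
        return sum(count_tokens(m) for m in self.messages)

    def add(self, msg: str) -> None:
        """Append a message; compact older history once the budget is exceeded."""
        self.messages.append(msg)
        if self.total_tokens() > self.budget and len(self.messages) > self.keep_recent:
            cut = len(self.messages) - self.keep_recent
            old, recent = self.messages[:cut], self.messages[cut:]
            self.messages = [summarize(old)] + recent


# Usage: a tiny budget forces two rounds of compaction.
ctx = Context(budget=10)
ctx.add("DECISION: use argparse")
ctx.add("read cli.py and list its commands")
ctx.add("DECISION: drop python2 support")
ctx.add("ran tests: 12 passed")
print(ctx.messages)
```

After the second compaction, the exploratory "read cli.py" message is gone but the first decision survives inside the summary. Folding earlier summaries into each new one is the design choice that keeps early decisions alive across repeated compactions; a real model makes that judgment itself instead of following a fixed tag rule.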

Given the risks of long-running agentic systems, OpenAI has built in guardrails. Codex-Max does not reach the “High” capability threshold for cybersecurity under the company’s Preparedness Framework, although it performs strongly on long-horizon evaluations and cybersecurity tests. By default, it runs inside a sandbox that limits file writes to its workspace and disables network access unless a developer explicitly enables it. Tool calls, terminal actions, and test results are logged for transparency, making it easier for developers to review the model’s work and intervene. OpenAI stresses that human oversight is still required, even as the model takes on more autonomous workflows.

Codex-Max is available today across the CLI, IDE extensions, cloud environments, and OpenAI’s code review workflow. It replaces GPT-5.1-Codex as the default model and is accessible to users on ChatGPT Plus, Pro, Business, Edu, and Enterprise tiers. API access is not yet available but is planned for a future release.

With Codex-Max, OpenAI is signaling its next major direction: AI agents that behave less like autocomplete tools and more like persistent engineering collaborators. By combining long-horizon reasoning, deep code comprehension, and multi-platform support, the model pushes AI development closer to end-to-end autonomous workflows.