Tidbit Archive

-

How Electric Fields Turn Neutral Molecules into Dipoles

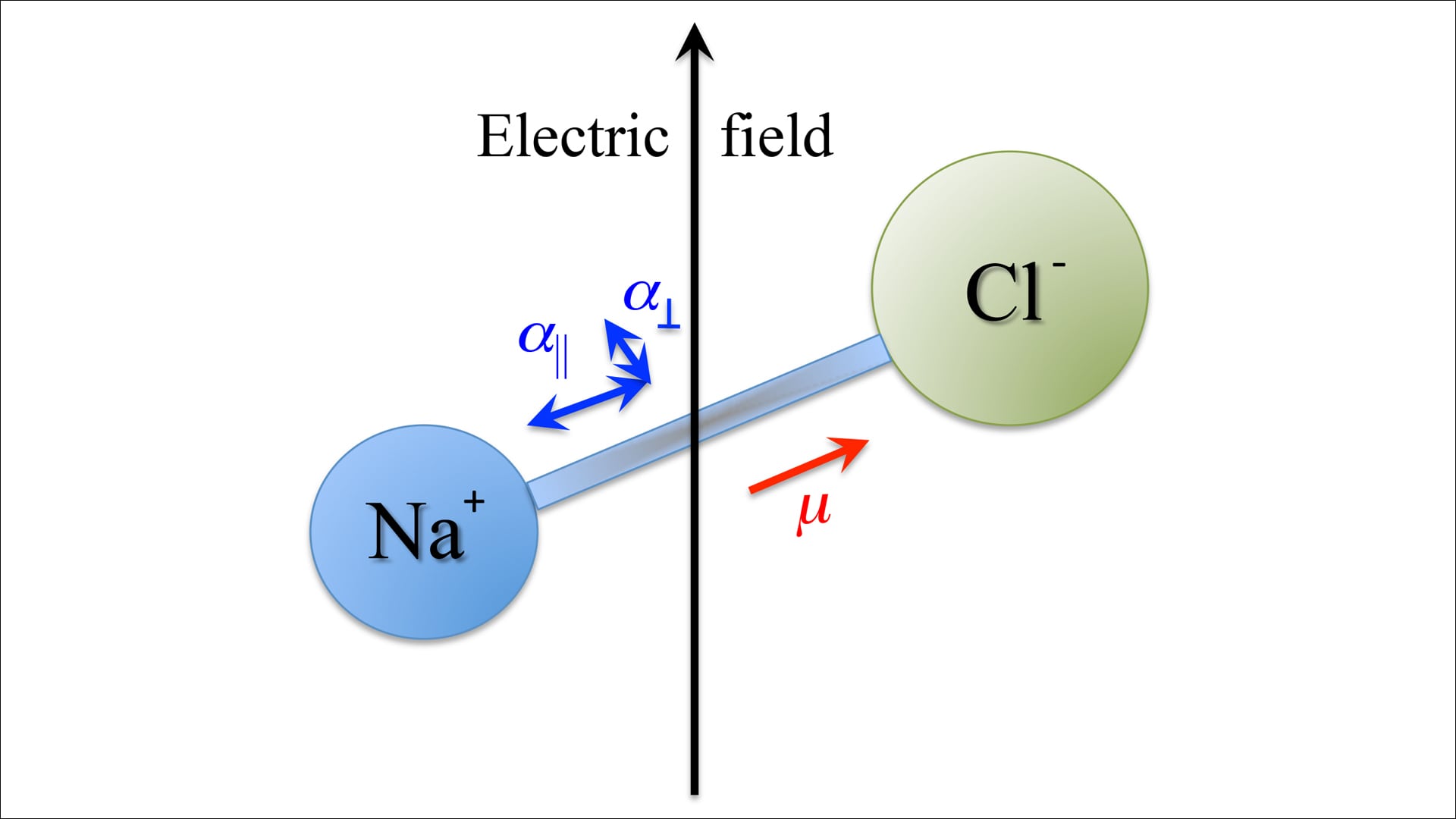

When you put an electrically neutral substance in a uniform electric field, it becomes polarized. This happens because the charges inside its molecules are displaced slightly. In a non-polar substance, the molecules have no dipole moment without a field, because the centers of their positive and negative charge coincide.

When an electric field is applied, the negatively charged electrons shift slightly opposite to the field direction, while the positively charged nuclei shift slightly along it. This small separation creates a temporary, induced dipole moment in the molecule.

For fields that are not too strong, this induced dipole moment is proportional to the applied field and can be written as

p = \alpha E

where \alpha is the polarizability, a measure of how easily the molecule can be polarized.
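As a rough numerical illustration of p = \alpha E, the sketch below plugs in an assumed polarizability for atomic hydrogen (\alpha/(4πε₀) ≈ 0.667 × 10⁻³⁰ m³, a commonly tabulated value) and an assumed field of 1 MV/m. It also models the induced dipole as charges +e and -e separated by a distance d, so that p = e·d. That picture is a simplification chosen only to show how small the displacement is; the function name `induced_dipole` is hypothetical.

```python
import math

# Physical constants (CODATA values, SI units)
EPSILON_0 = 8.8541878128e-12         # vacuum permittivity, F/m
ELEMENTARY_CHARGE = 1.602176634e-19  # C
BOHR_RADIUS = 5.29177210903e-11      # m


def induced_dipole(alpha: float, field: float) -> float:
    """Linear response p = alpha * E, valid for fields that are not too strong."""
    return alpha * field


# Assumption: atomic hydrogen, with alpha / (4*pi*eps0) ~ 0.667e-30 m^3
alpha_h = 4 * math.pi * EPSILON_0 * 0.667e-30  # polarizability in C*m^2/V

# Assumption: an illustrative laboratory-scale field of 1 MV/m
field = 1.0e6  # V/m

p = induced_dipole(alpha_h, field)  # induced dipole moment, C*m

# Simplified picture: charges +e and -e separated by d, so p = e * d
d = p / ELEMENTARY_CHARGE  # charge separation, m

print(f"alpha = {alpha_h:.3e} C*m^2/V")
print(f"p     = {p:.3e} C*m")
print(f"d     = {d:.3e} m (about {d / BOHR_RADIUS:.1e} Bohr radii)")
```

Under these assumptions the separation comes out on the order of 10⁻¹⁶ m, roughly a hundred-thousandth of a Bohr radius, which is why the charges are described as shifting only slightly.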

In a bulk sample, many molecules respond this way at once, producing an overall polarization. This is described by the polarization vector P, which is the dipole moment per unit volume. How much polarization develops depends on the strength of the electric field, on how easily each molecule can be polarized, and on how many molecules there are per unit volume.
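Assuming the sample contains N identical molecules per unit volume and that each molecule feels essentially the applied field (a reasonable approximation for a dilute gas; in dense materials the local field at a molecule differs from the applied one), the polarization follows directly from p = \alpha E:

```latex
\mathbf{P} = N\,\mathbf{p} = N\alpha\,\mathbf{E}
```

So P grows with the field strength, with the polarizability, and with the number density N.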

Because the field is uniform, the forces on the positive and negative charges are equal in magnitude and opposite in direction, so they cancel. As a result there is no net force on the molecule, and it does not move as a whole. Only the charges inside the molecule are rearranged.
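The cancellation can be written out by modeling the molecule's induced dipole as a charge +q and a charge -q (a simplification). In a uniform field both charges feel the same E, so the net force vanishes; assuming an isotropic polarizability, the induced dipole also points along E, so the torque vanishes as well:

```latex
\mathbf{F}_{\text{net}} = (+q)\,\mathbf{E} + (-q)\,\mathbf{E} = \mathbf{0},
\qquad
\boldsymbol{\tau} = \mathbf{p}\times\mathbf{E} = \alpha\,\mathbf{E}\times\mathbf{E} = \mathbf{0}
```

In a non-uniform field, E would take different values at the two charge positions and a net force could remain, which is why the uniformity of the field matters here.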

So in a uniform electric field, a non-polar substance acquires induced dipole moments and becomes polarized, but the field does not set it into any overall motion.

-

Apple’s new MacBook Neo targets a cheaper MacBook tier

Apple has introduced the MacBook Neo as a new entry-level addition to its laptop lineup, aimed at expanding access to the macOS ecosystem. Positioned below the MacBook Air, the device focuses on delivering core MacBook features at a more affordable price point while maintaining the design language and efficiency Apple laptops are known for. The MacBook Neo uses Apple’s modern chip architecture, allowing it to provide strong battery life and efficient performance for everyday tasks such as browsing, productivity work, and media consumption. While it does not target professional workloads like the MacBook Pro, it offers enough power for students and casual users who want a reliable macOS laptop without the premium pricing. The launch reflects Apple’s strategy to widen its hardware ecosystem and attract new users who may have previously chosen mid-range Windows laptops or Chromebooks. By lowering the entry barrier to macOS, the MacBook Neo could help bring more users into Apple’s broader ecosystem of devices and services.

-

Meta just validated a smaller AI cloud bet

Nebius, a Dutch cloud provider most people have never heard of, just secured a deal with Meta worth up to $27 billion: $12 billion in dedicated AI capacity plus up to $15 billion in additional compute over five years, partly built on NVIDIA’s Vera Rubin chips. The news sent Nebius stock up 14%. What makes the deal significant is not the size alone but what it represents: a named hyperscaler customer with a hard multiyear commitment, the kind of anchor contract that turns an infrastructure story from promising to credible. It also arrives as Meta plans up to $135 billion in AI capital expenditure this year, suggesting the company is deliberately spreading its infrastructure bets beyond the usual cloud giants. If a player like Nebius can land a deal at this scale, smaller providers now have a visible path to relevance, provided they can secure chips and deliver capacity reliably. The open question is whether this signals a broader structural shift in how hyperscalers procure AI infrastructure, or whether Nebius simply caught Meta at the right moment with the right offer.

-

AI labs are getting closer to weapons risk

Anthropic has posted a role for a policy manager specializing in chemical weapons, high-yield explosives, and dirty bombs, tasked with preventing catastrophic misuse of its models. OpenAI has listed a similar position focused on biological and chemical risks, with compensation reportedly as high as $455,000. The hiring signals that the major labs now treat weapons-grade threat scenarios as operational concerns serious enough to warrant specialists drawn from defence and weapons fields, not generic safety teams. The uncomfortable subtext is that the models have grown capable enough to make this necessary while the regulatory frameworks governing their most sensitive use cases remain thin. There is something clarifying about this moment: when AI companies need in-house chemical weapons experts to stress-test their guardrails, the conversation has moved well past theoretical risk. At the same time, governments are pulling these tools toward military applications, widening the gap between the safety language labs use publicly and the reality of how the technology gets deployed. The unresolved tension, whether the line gets drawn first by the labs, the military, or the law, is no longer an abstract policy question. It is a race with real consequences.

-

NVIDIA is packaging the agent era

At GTC, NVIDIA launched its Agent Toolkit — a bundle of open models, runtimes, and tools for building autonomous agents — signaling a deliberate move up the stack from chip maker to enterprise AI infrastructure provider. The toolkit includes OpenShell for policy, network, and privacy guardrails, while its AI-Q blueprint claims to top DeepResearch Bench and cut query costs by over 50% by splitting orchestration between frontier and Nemotron open models. The presence of Adobe, Salesforce, SAP, Cisco, and ServiceNow in the same release reads less like a demo and more like a coordinated land grab for the enterprise control layer. Stripped of the hype, much of this is middleware, security tooling, and workflow plumbing — unglamorous infrastructure where adoption tends to be slow, but where winners are ultimately chosen by trust, integration depth, and cost rather than model quality alone. The real question NVIDIA is quietly answering is not whether agents will become standard at work, but who gets to own the logic that governs how they behave when they do.

-

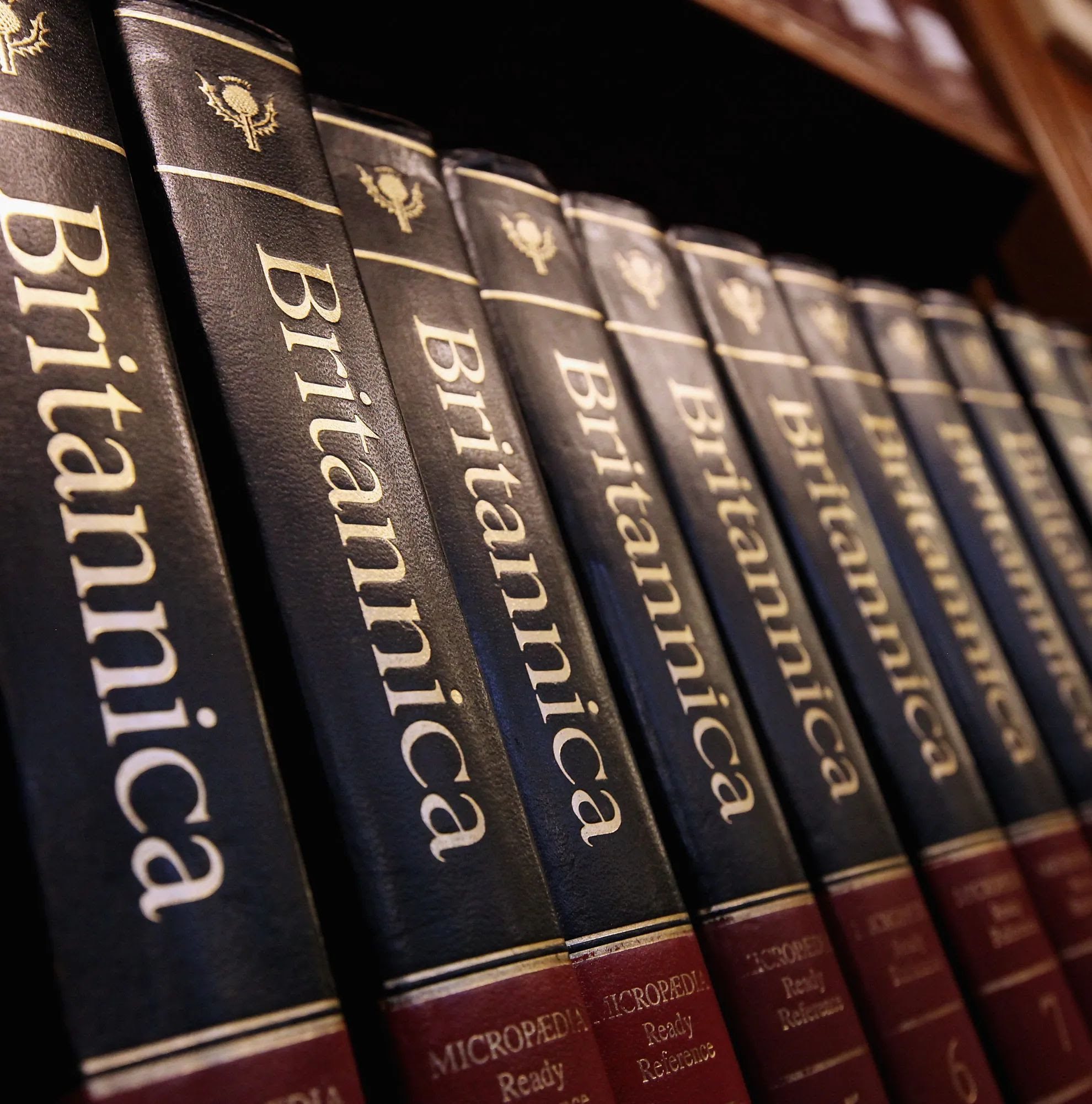

Britannica’s lawsuit shows the AI battle is shifting

Encyclopedia Britannica and Merriam-Webster have sued OpenAI in a case that sharpens one of AI’s most consequential legal debates. Britannica alleges OpenAI trained its models on nearly 100,000 copyrighted articles without permission, reproduces that material in outputs, and embeds Britannica content inside retrieval-based systems — while also arguing that false answers wrongly attributed to its brand cause trademark and reputational harm beyond copyright alone. The lawsuit joins a growing legal wave from publishers including The New York Times and Ziff Davis, all pressing a question courts have yet to fully resolve: whether training on copyrighted material is infringement or protected transformation. What makes this case feel weightier than most is who is suing — Britannica is not just a content business but a symbol of organized, authoritative knowledge, and its entry into litigation reframes the debate from technical fair use arguments to a blunter challenge: can AI companies build commercial products at scale by absorbing other people’s work without permission, payment, or accountability?

-

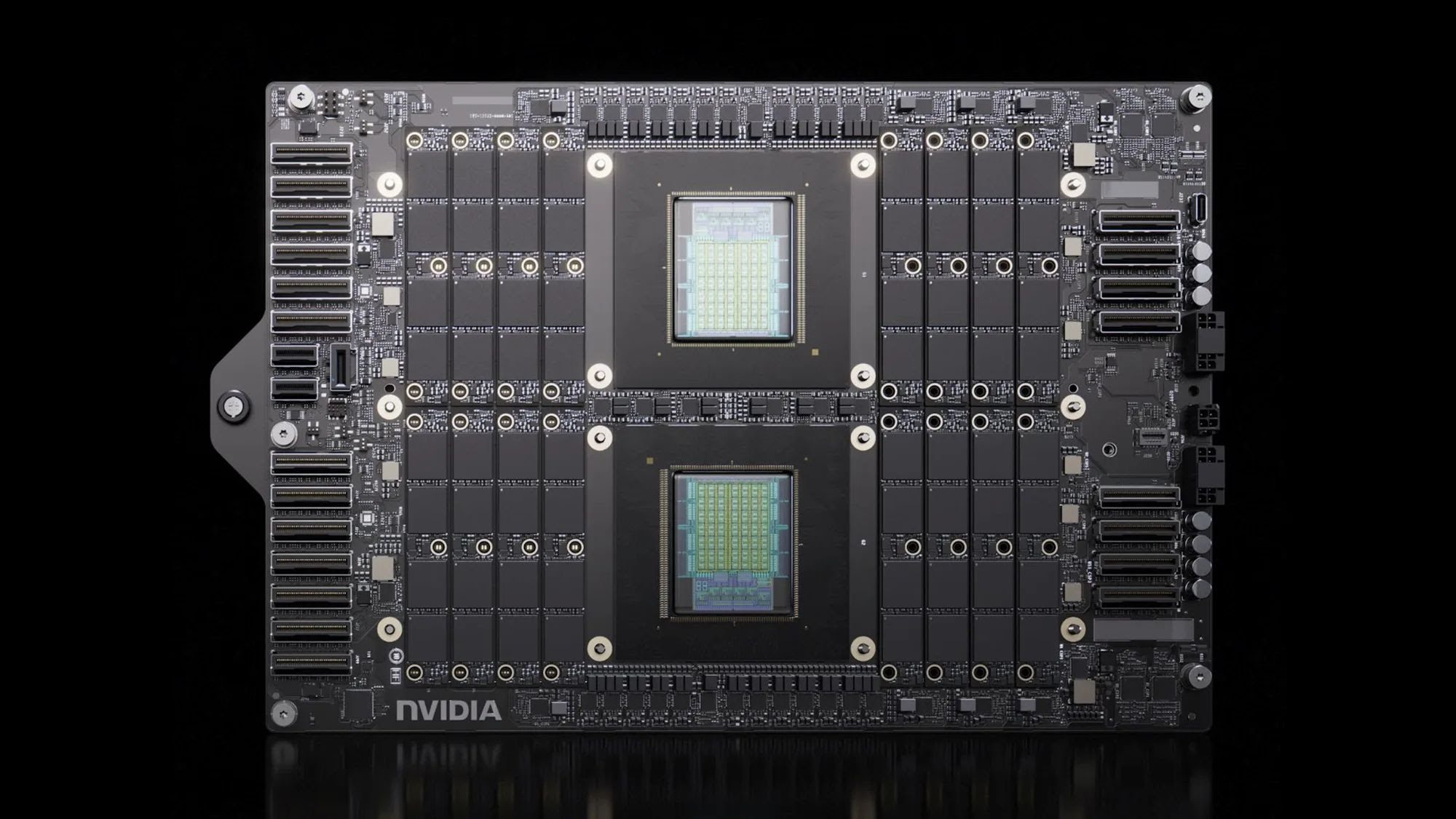

Vera shows NVIDIA wants to own the system

NVIDIA’s Vera CPU, unveiled at GTC, is less a chip launch and more a strategic claim on the future of AI infrastructure. Built specifically for agentic AI and reinforcement learning, Vera promises 50% faster performance and twice the efficiency of traditional rack-scale CPUs — gains that matter because agentic AI demands far more than model inference; it requires coordinating tools, data, code, and orchestration at scale. Vera is designed to integrate tightly with NVIDIA’s full stack — Rubin GPUs, NVLink-C2C, BlueField DPUs, and ConnectX networking — turning it into a building block of a broader AI factory architecture already attracting cloud providers, server makers, and companies like Cursor and Redpanda. Having won the GPU era, NVIDIA is now moving to define the operating logic of the agentic era, betting that the next AI race won’t just be about acceleration, but about orchestration built into the machine itself.

-

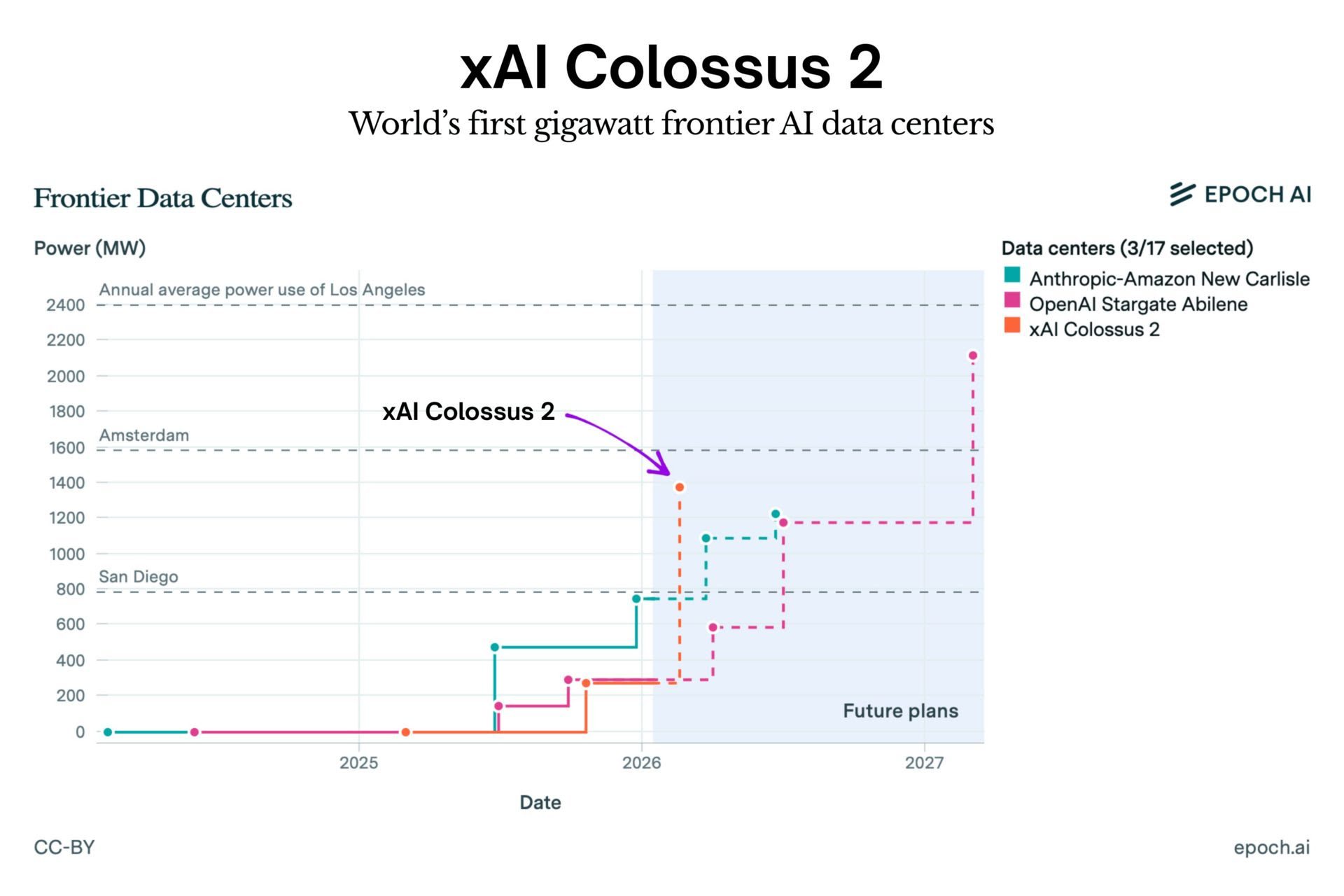

xAI powers up Colossus 2, the world’s largest single-site cluster

xAI has officially switched on Colossus 2, a record-breaking AI training cluster drawing one gigawatt of power, more than the entire city of San Francisco uses at peak demand. As the first operational system of this scale, it allows xAI to train massive frontier models at a single site rather than splitting the workload across multiple data centers. The facility is expected to scale up to 1.5 gigawatts by April, with plans to reach 2 gigawatts soon after. The system is built on approximately 555,000 GPUs, representing an estimated hardware investment of $18 billion.

-

OpenAI confirms its first hardware device arrives in 2026

OpenAI is officially “on track” to launch its first AI hardware device in 2026. Executive Chris Lehane confirmed at Axios House Davos that the company is shifting focus from experimental concepts to stable, everyday consumer products. He described the upcoming hardware as a more natural way to interact with AI, moving away from reliance on traditional screens like phones and laptops. This timeline aligns with recent comments from CFO Sarah Friar regarding 2026 as a pivotal year for monetization. It also fits CEO Sam Altman’s vision to define a new category of AI-first devices, developed in collaboration with former Apple design chief Jony Ive.

-

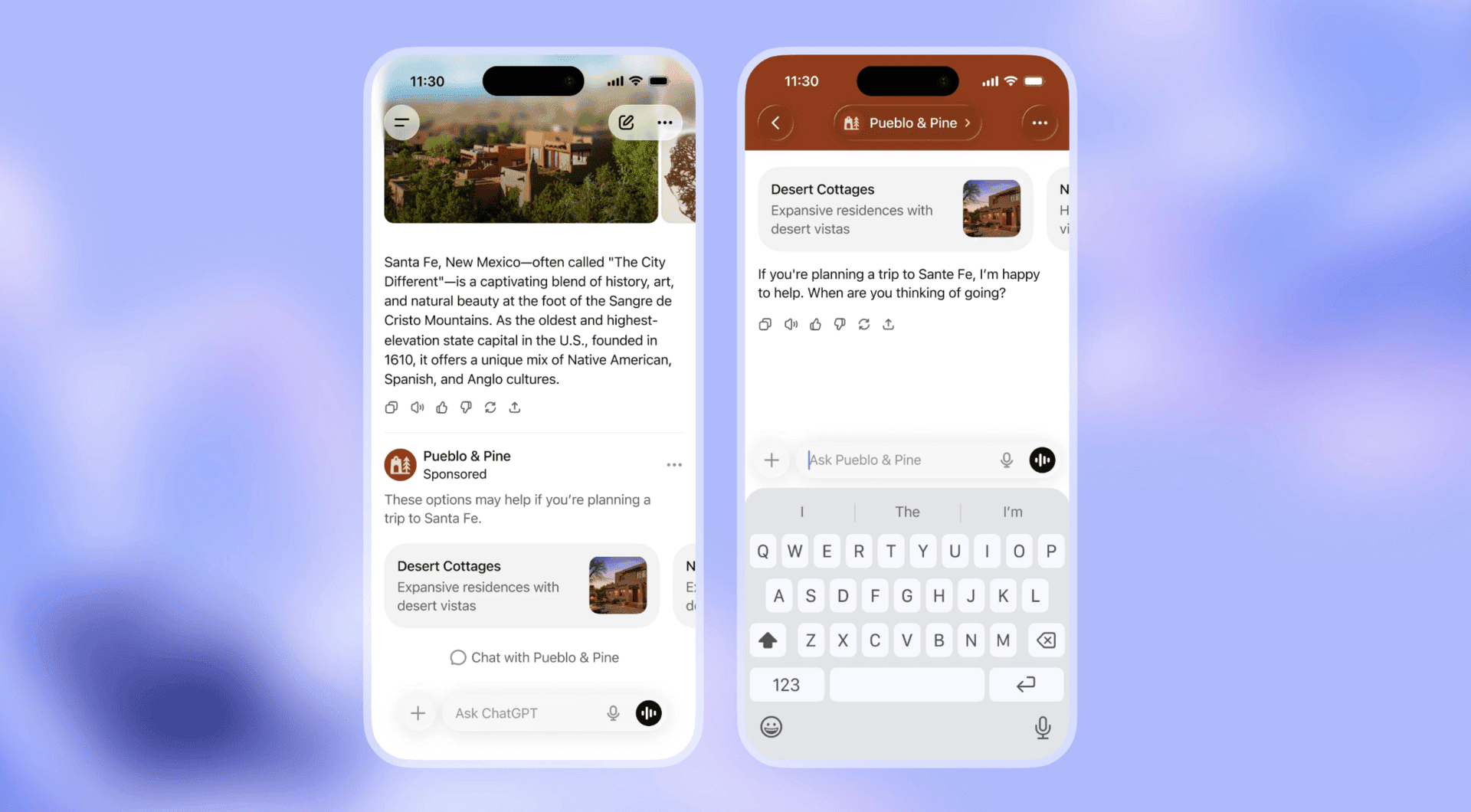

OpenAI officially introduces ads to ChatGPT

OpenAI has begun testing ads for free users in the US, offering higher usage limits in exchange for viewing sponsored content. At the same time, the company is expanding “ChatGPT Go” globally, an $8/month plan that includes ads but unlocks extra features, while the Plus and Enterprise tiers remain ad-free. OpenAI says ads will be clearly labeled, won’t influence generated answers, and won’t involve selling user data. Ads will also not be shown to minors or appear alongside sensitive topics like health or politics. While the move is designed to fund broader access to advanced AI, critics warn it could erode user trust if the chatbot begins promoting products too aggressively.