Google DeepMind has unveiled something pretty wild: SIMA 2, an AI agent that doesn't just follow instructions in video games. It reasons about what it's doing, learns from its mistakes, and can work through unfamiliar situations on its own. And yes, you can instruct it with emojis.

Last year, DeepMind introduced SIMA 1, which could follow basic commands like “climb the ladder” or “open the door” across different games. It was a solid start, but SIMA 1 was essentially a very obedient robot: on complex tasks it succeeded only 31% of the time, compared with 71% for humans.

SIMA 2's big upgrade is that it's built on Gemini, Google's flagship AI model. Joe Marino, a senior research scientist at DeepMind, calls it “a step change and improvement in capabilities.” The agent can now grasp the goal behind an instruction, explain its own thought process, and adapt to situations it has never seen before. According to DeepMind's internal tests, it nearly doubled the original version's performance. As one researcher put it, interacting with SIMA 2 is “less like giving it commands and more like collaborating with a companion who can reason about the task at hand.”

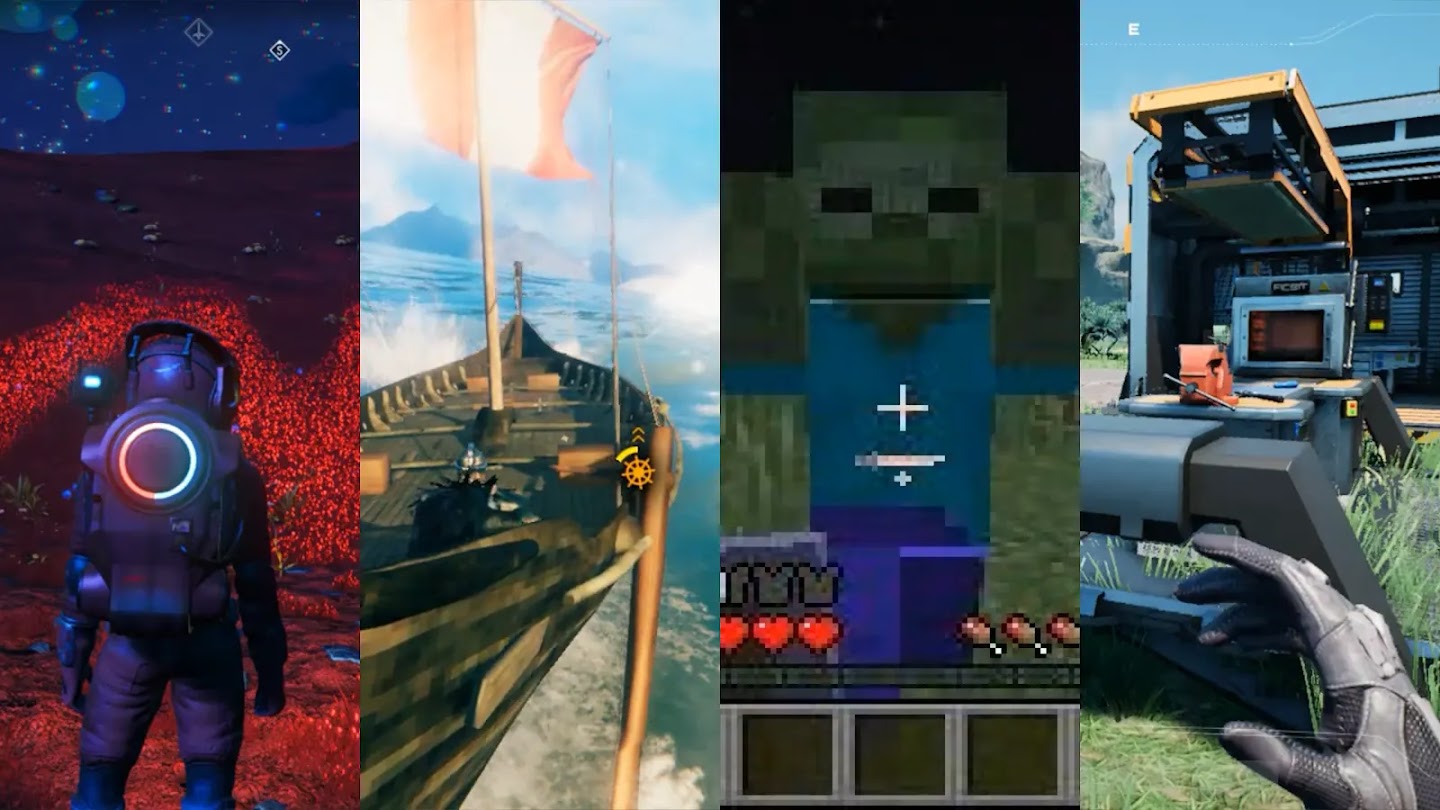

SIMA 2 was trained on commercial games such as No Man’s Sky, Goat Simulator 3, Valheim, and Satisfactory. Here's where it gets fascinating: the team paired it with Genie 3, another DeepMind project that generates brand-new 3D worlds from text prompts or images. They then dropped SIMA 2 into these AI-generated environments, worlds it had never encountered before. And it figured them out.

It oriented itself, understood the instructions, and started taking meaningful actions. In one test, SIMA 2 was told to “go to the house that is like a ripe tomato.” The agent interpreted the metaphor, picked out red-looking structures, and headed toward them. That goes beyond simple pattern matching: the agent had to connect “ripe tomato” to the color red before it could act.

Probably the most exciting part is that SIMA 2 teaches itself. When the agent enters a new environment, a separate Gemini model generates new tasks for it to attempt, and a reward model scores how well SIMA 2 performs. The agent then uses these self-generated experiences as training data, learning from its failures and gradually improving, much as a human does through trial and error.
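To make that loop a little more concrete, here is a toy Python sketch. It is not DeepMind's implementation, and nothing here reflects how SIMA 2 actually represents skills or trains: `propose_task`, `reward_model`, `ToyAgent`, the task list, and the simple skill-update rule are all hypothetical stand-ins for the task-setting Gemini model, the reward model, and the agent's training step.

```python
import random
from dataclasses import dataclass, field

# Hypothetical task pool the "task setter" draws from.
TASKS = ["chop down a tree", "go to the red house", "climb the ladder"]


@dataclass
class ToyAgent:
    """Hypothetical stand-in for the agent: one skill level in [0, 1] per task."""
    skill: dict = field(default_factory=dict)
    initial_skill: float = 0.1
    learning_rate: float = 0.2

    def attempt(self, task: str, rng: random.Random) -> bool:
        # Succeed with probability equal to the current skill on this task.
        return rng.random() < self.skill.get(task, self.initial_skill)

    def learn(self, experiences: list[tuple[str, float]]) -> None:
        # Move skill toward 1.0 in proportion to how badly each attempt scored,
        # a crude proxy for "learning from failures". Successes leave skill unchanged.
        for task, reward in experiences:
            current = self.skill.get(task, self.initial_skill)
            self.skill[task] = current + self.learning_rate * (1.0 - reward) * (1.0 - current)


def propose_task(rng: random.Random) -> str:
    # Hypothetical stand-in for the Gemini model that sets new challenges.
    return rng.choice(TASKS)


def reward_model(success: bool) -> float:
    # Hypothetical stand-in for the separate reward model: 1.0 for success, 0.0 otherwise.
    return 1.0 if success else 0.0


def self_improve(agent: ToyAgent, rounds: int, episodes_per_round: int, seed: int = 0) -> list[float]:
    """Run the propose -> attempt -> score -> learn loop; return each round's success rate."""
    rng = random.Random(seed)
    success_rates = []
    for _ in range(rounds):
        experiences = []
        for _ in range(episodes_per_round):
            task = propose_task(rng)
            reward = reward_model(agent.attempt(task, rng))
            experiences.append((task, reward))
        # The self-generated, scored experiences become the training data.
        agent.learn(experiences)
        success_rates.append(sum(r for _, r in experiences) / episodes_per_round)
    return success_rates
```

Calling `self_improve(ToyAgent(), rounds=5, episodes_per_round=20)` returns the success rate for each round. In this toy version, failed attempts are the only thing that raises skill, which is a deliberately simple way to mimic "learning from its failures."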

Because SIMA 2 inherits Gemini’s multimodal capabilities, you can communicate with it in some unconventional ways. As Marino demonstrated: “You instruct it 🪓🌲, and it’ll go chop down a tree.” It handles text, voice, sketches, and emojis alike. The agent can also explain itself, describing its intentions and walking you through the steps it plans to take.

Watching an AI master Goat Simulator 3 is entertaining, but DeepMind’s endgame is much bigger. Frederic Besse, a senior staff research engineer, told reporters that the ultimate goal is to develop AI agents for robots that operate autonomously in the real world. The skills SIMA 2 is learning (navigation, tool use, multi-step planning, and collaborating with humans) are exactly what a robot working in a warehouse or factory would need.

DeepMind is upfront about SIMA 2’s limitations. The agent still struggles with long, complex tasks that require extensive multi-step reasoning, its memory is limited, and controlling games through simulated keyboard and mouse input remains imprecise. These aren’t small problems; they show how far we still are from truly general-purpose AI.

SIMA 2 is currently a research preview, available only to a small group of academics and game developers, and there’s no timeline for a broader release. Still, the direction is clear: AI is moving toward agents that can see, understand, and act in three-dimensional spaces while reasoning about what they’re doing. The path from a virtual companion in No Man’s Sky to a helpful robot assistant is long, but projects like SIMA 2 are laying the groundwork, one video game at a time.